🤖Diving into Prompt Engineering: Assessing ChatGPT and Bard for Python Programming😎(by @ace108)

I woke up too early in the morning, but not early enough to try to fall asleep again. So I decided to whip out my phone and do a bit of an experiment to compare how well ChatGPT does against Bard at Python programming.

🎶Meanwhile, here is some music for you to enjoy as you read. This piece is part of Vivaldi’s The Four Seasons, specifically the first of the four violin concertos, called Spring.


Source: https://musopen.org/

https://soundcloud.com/alan-chang-563971986/four-concerti-grossi-4-vivaldi-op8-i-la-primavera-allegro-largo-allegro

Source: https://musopen.org/music/2213/antonio-vivaldi/the-four-seasons/

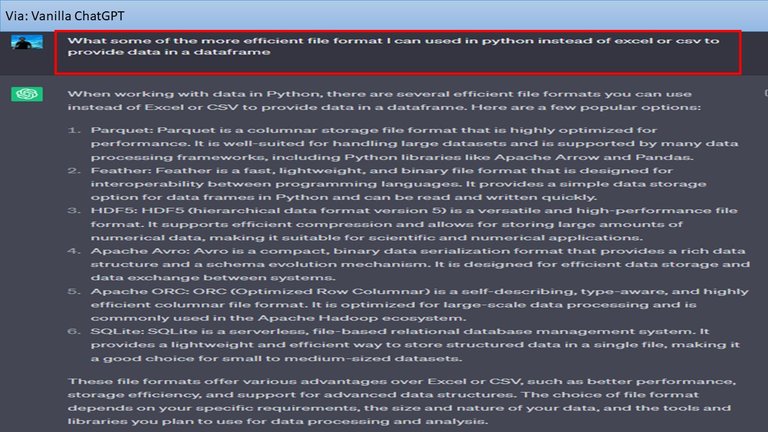

Half awake (which explains the typo and grammar error), I tried to prompt-engineer ChatGPT into telling me other file formats to consider, and it listed a few.

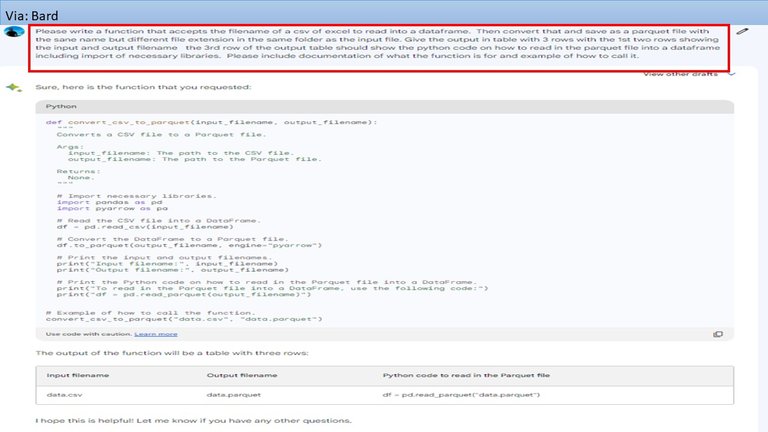

I copied the same prompt and ran it through Bard. Its list was slightly different, but the first 3 items were the same.

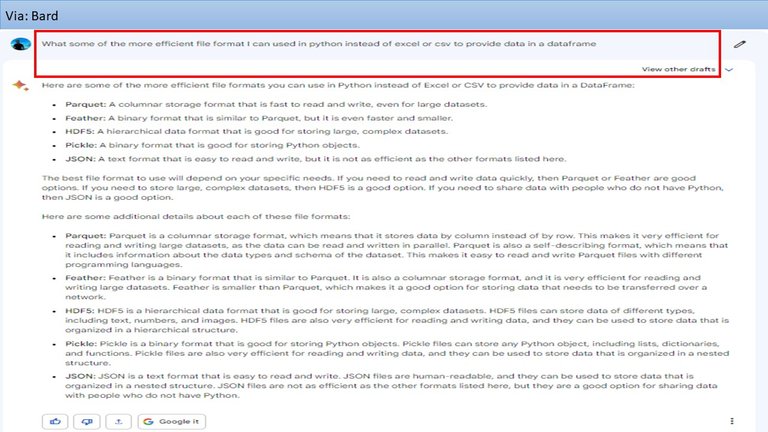

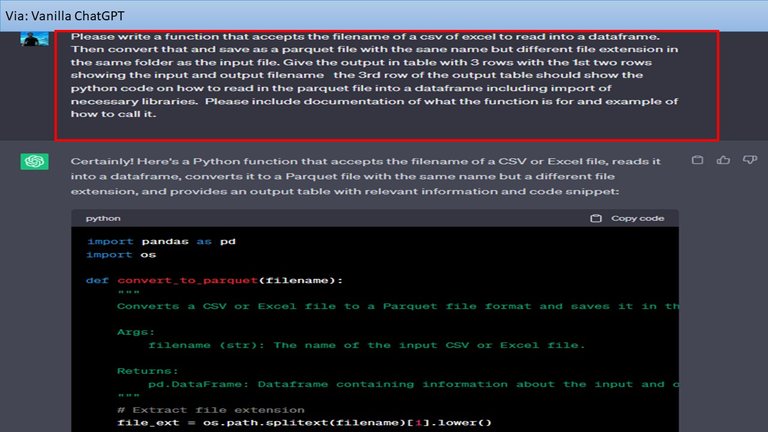

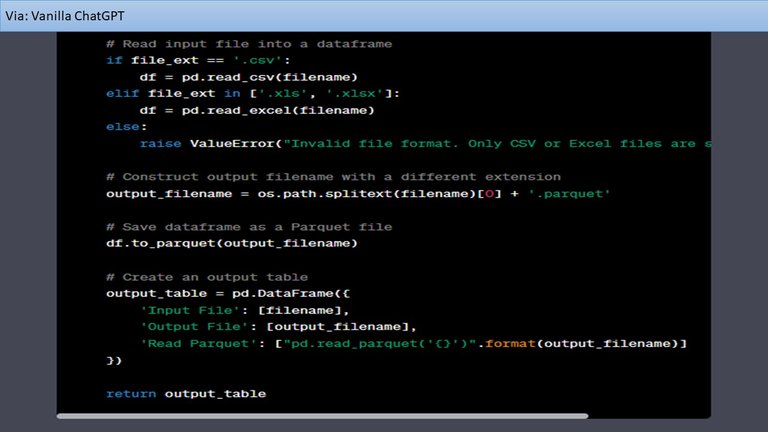

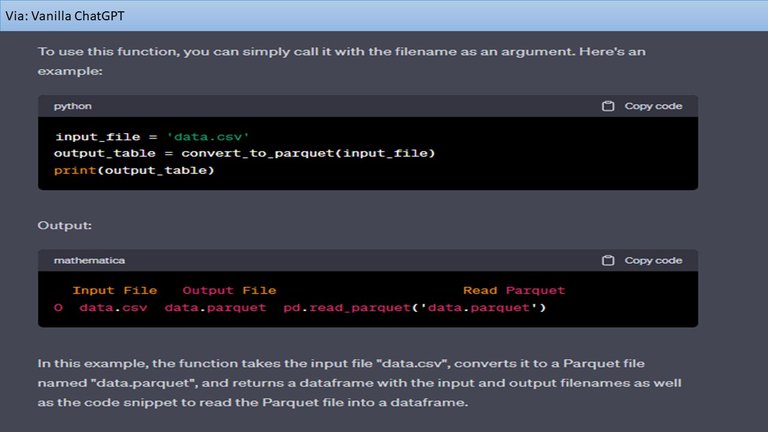

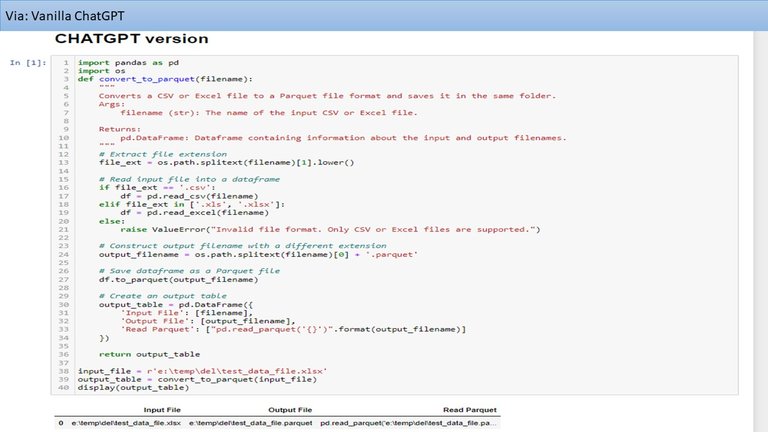

Then I went back to ChatGPT and asked it to create a Python function to convert a CSV or Excel file (my prompt had a typo: "of" instead of "or") to Parquet, keeping the same filename in the same folder as the input file. I also asked it to output a table with 3 rows: the first two showing the input and output filenames, and the third showing the Python code I could use to read in the created Parquet file.
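For reference, here is a minimal sketch of the kind of function that prompt asks for. It is not ChatGPT's or Bard's output (those are in the screenshots). It assumes pandas is installed together with a Parquet engine such as pyarrow, plus openpyxl for .xlsx files (legacy .xls needs xlrd), and the helper names `parquet_path_for`, `reader_for` and `convert_to_parquet` are hypothetical, chosen for illustration.

```python
from pathlib import Path

# Map input suffixes to the pandas reader that should parse them.
# Assumption: only these three extensions need to be supported.
READERS = {
    ".csv": "read_csv",
    ".xlsx": "read_excel",
    ".xls": "read_excel",
}


def parquet_path_for(input_path):
    """Return the output path: same folder, same stem, .parquet suffix."""
    return Path(input_path).with_suffix(".parquet")


def reader_for(input_path):
    """Pick the pandas reader name from the file extension (case-insensitive)."""
    suffix = Path(input_path).suffix.lower()
    try:
        return READERS[suffix]
    except KeyError:
        raise ValueError(f"Unsupported file type: {suffix!r}") from None


def convert_to_parquet(input_path):
    """Convert a CSV or Excel file to a Parquet file next to the original.

    Requires pandas plus a Parquet engine (pyarrow or fastparquet);
    .xlsx input additionally needs openpyxl.
    """
    import pandas as pd  # imported lazily so the helpers above work without pandas

    input_path = Path(input_path)
    output_path = parquet_path_for(input_path)
    reader = getattr(pd, reader_for(input_path))  # pd.read_csv or pd.read_excel
    df = reader(input_path)
    df.to_parquet(output_path, index=False)
    return output_path
```

Calling `convert_to_parquet("data/sales.xlsx")` (a hypothetical path) would write `data/sales.parquet` and return that path. Choosing the reader from the extension, instead of always calling `read_csv`, is what keeps an Excel file from being parsed as CSV.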

It spat out the code quite fast.

I didn't look at it in detail.

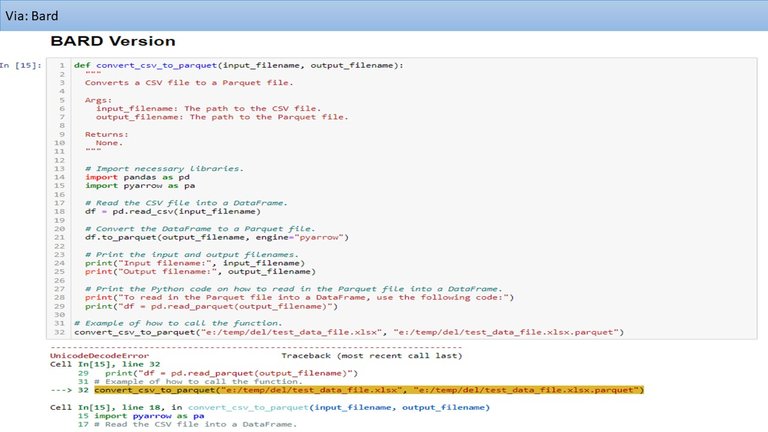

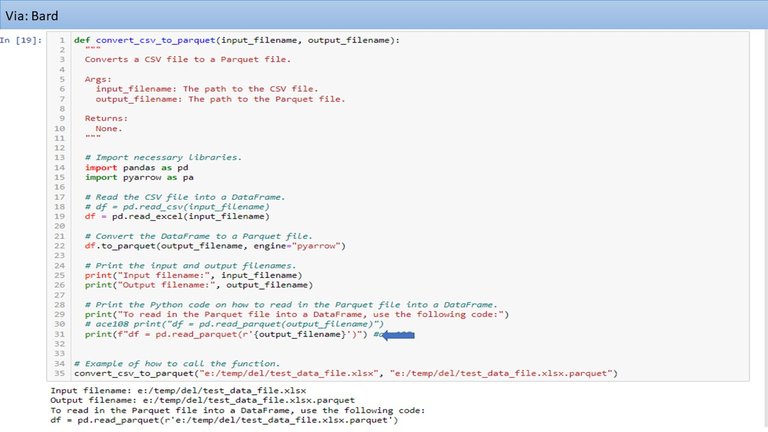

Then I copied the prompt and ran it through Bard. The code was also spat out quite fast.

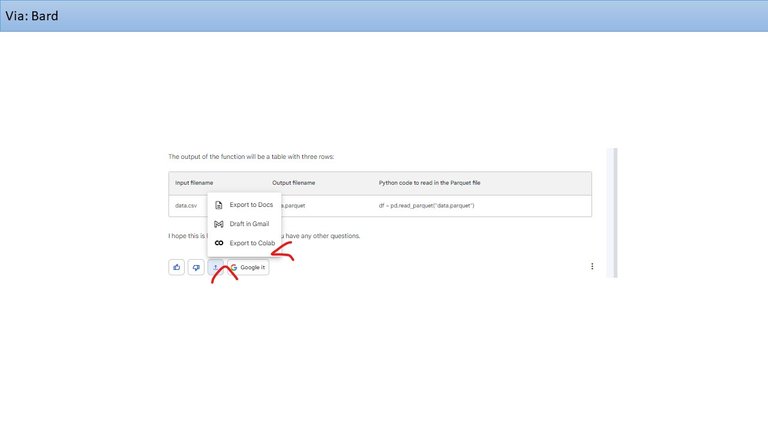

Something I hadn't seen before was an option to export the code to Colab, which I thought was pretty cool.

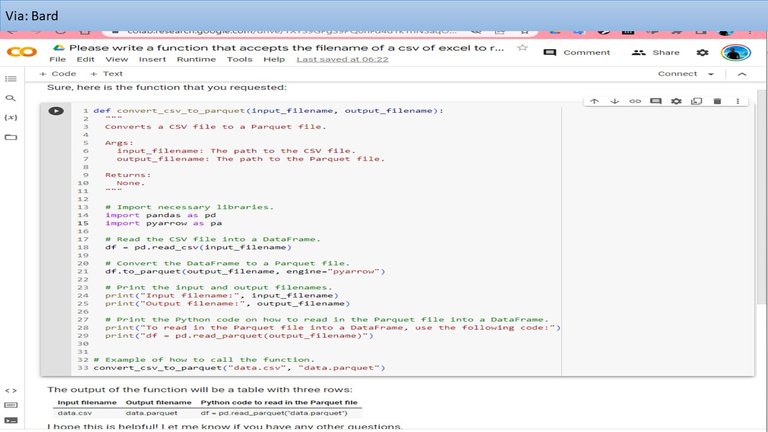

So I tried that, but I didn’t run the code because I didn't have a file for it to read.

After I got up, I decided to test ChatGPT's code first. The output table came out with 3 columns instead of the 3 rows I asked for.
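To make the row versus column distinction concrete, here is a minimal plain-Python sketch (no pandas) of the layout I asked for: one (label, value) pair per row, with the read-back code in the third row. The function names and file paths are hypothetical, and the generated `pd.read_parquet(...)` string assumes the reader has imported pandas as `pd`.

```python
def summary_rows(input_path, output_path):
    """Build the requested table: three rows, each a (label, value) pair."""
    read_code = f"pd.read_parquet({str(output_path)!r})"
    return [
        ("Input file", str(input_path)),
        ("Output file", str(output_path)),
        ("Code to read it", read_code),
    ]


def print_table(rows):
    """Print each (label, value) pair on its own line, labels left-aligned."""
    width = max(len(label) for label, _ in rows)
    for label, value in rows:
        print(f"{label.ljust(width)} | {value}")


if __name__ == "__main__":
    # Hypothetical paths, for illustration only.
    print_table(summary_rows("data/sales.xlsx", "data/sales.parquet"))
```

Each item lands on its own line, stacked vertically; a 3-column layout would instead place the three items side by side in a single row.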

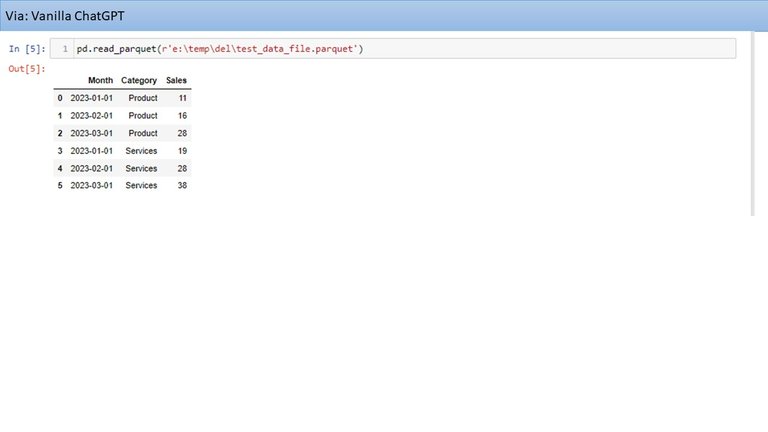

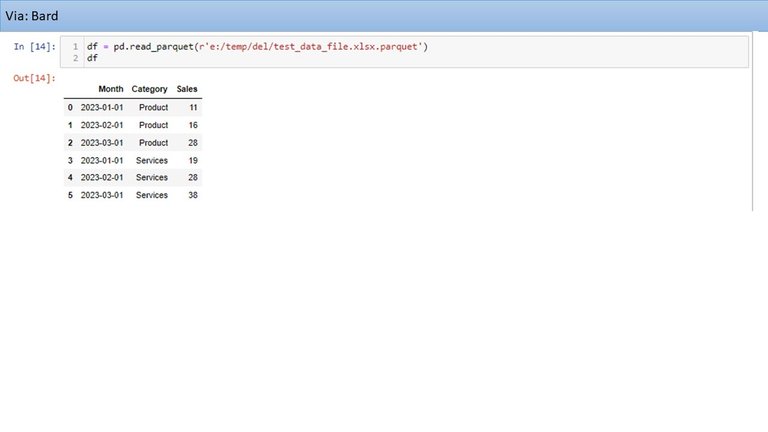

I tested the Python code generated by the program to read the created Parquet file, and it worked quite well.

Then I tried the program created by Bard, which gave an error because it read my Excel file as a CSV. I guess it didn't like the typo in my prompt.
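One defensive option (a sketch of my own, not part of Bard's code) is to check a file's first bytes before trusting how it will be parsed: .xlsx files are ZIP archives that start with `PK\x03\x04`, and legacy .xls files are OLE2 compound files that start with `D0 CF 11 E0 A1 B1 1A E1`. The function names are hypothetical, and treating everything else as CSV-like text is an assumption.

```python
# File signatures ("magic numbers") for the two Excel formats.
XLSX_SIGNATURE = b"PK\x03\x04"  # .xlsx is a ZIP container (so are .docx, .zip, ...)
XLS_SIGNATURE = b"\xd0\xcf\x11\xe0\xa1\xb1\x1a\xe1"  # legacy .xls: OLE2 compound file


def kind_from_header(head):
    """Classify the first bytes of a file as 'xlsx', 'xls' or 'text'."""
    if head.startswith(XLSX_SIGNATURE):
        return "xlsx"  # a ZIP signature only means "probably xlsx"
    if head.startswith(XLS_SIGNATURE):
        return "xls"
    return "text"  # assumption: anything else is CSV-like text


def sniff_file_kind(path):
    """Read just the first 8 bytes of the file and classify them."""
    with open(path, "rb") as f:
        return kind_from_header(f.read(8))
```

A converter could call `sniff_file_kind` first and refuse to pass a file to `read_csv` when it returns `'xlsx'` or `'xls'`, turning a confusing parse error into a clear message.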

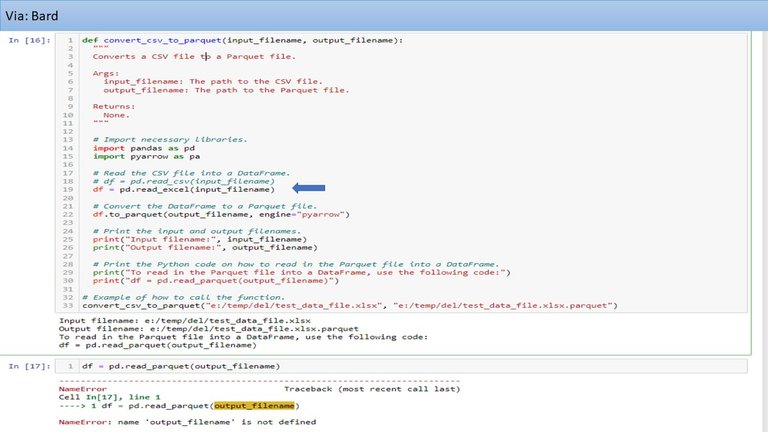

I fixed that and got a different error. So, I had to do a bit more debugging.

Finally, I tried the Python code generated by the program to read the created Parquet file.

It worked well.

Based on this limited experiment, it is probably not fair to draw a definitive conclusion about which model is better. ChatGPT produced Python code close to what I wanted, even accommodating my typo and grammar error, but it had one issue: the table came out as columns instead of rows. Bard didn't do as well, probably due to the typo in the prompt, but its code worked after a bit of debugging. Additionally, Bard offered a unique feature to export to Colab, which was nice. So I think I'd go to ChatGPT first, and if the result is good enough, I can skip Bard.

Check out my other posts: @ace108


Seeing how fast AI is developing, it is probably good to check other solutions from time to time. There will probably be better solutions over time, and ChatGPT and Bard will probably improve as well. The results can also differ from task to task; there could be tasks in which Bard is (or will be) better than ChatGPT. After all, Bard is expressly an experimental conversational AI service.

Things are moving so fast. The competition must be intense.

Tried one of the graphic art ones; they turn out some interesting stuff lol

I've been wanting to try Midjourney but have been busy. I tried a bit of DALL-E and Craiyon, but I don't have fancy requirements, and then https://bing.com/create came along, which works well for occasional needs. Now I just prompt Bing Chat directly. It generates 4 images for review quite well.

Interesting. Rehived.

Thanks


Thank you

Bard is really good; since it is free, I like Bard more than ChatGPT.

I think it's still useful. As they try to outdo each other, I hope we all benefit from it.

It's good to do a comparison to see whether there is much difference. From your research, I believe there isn't much difference, though they vary.

I think they have different strengths, so sometimes you have to try both.

AI is everything these days wow 😮
